While math and design may seem like uncommon partners, our philosophy at Seer is to always use as much data as possible to drive design decisions—especially when it comes to A/B testing.

A data-driven approach to A/B testing means preparing for success by 1) anticipating potential hurdles and 2) ensuring that you have definitive benchmarks to measure results against.

One thing to stress is that this approach is useful regardless of your A/B testing platform, although if you're using Google Optimize, check out our Installation Instructions, AdWords Integration, and Optimize vs. Optimize 360 articles. These tips should apply to any area of your site and any way you want to test!

A question you may be asking is why be data driven at all? You can just go off of your gut and you’ll be fine, right?

The short answer is that every A/B test starts with a hypothesis, and that hypothesis should be informed by the data you gather, not retrofitted with data chosen to back it up (you run a serious risk of confirmation bias here!). The more useful data you have, the easier it is to form an unbiased hypothesis. By combining the quantitative (GA) with the qualitative (Hotjar), along with expertise in the page’s purpose and design, you can see powerful results.

Data Driven A/B Testing Process

Pre-Test Preparation

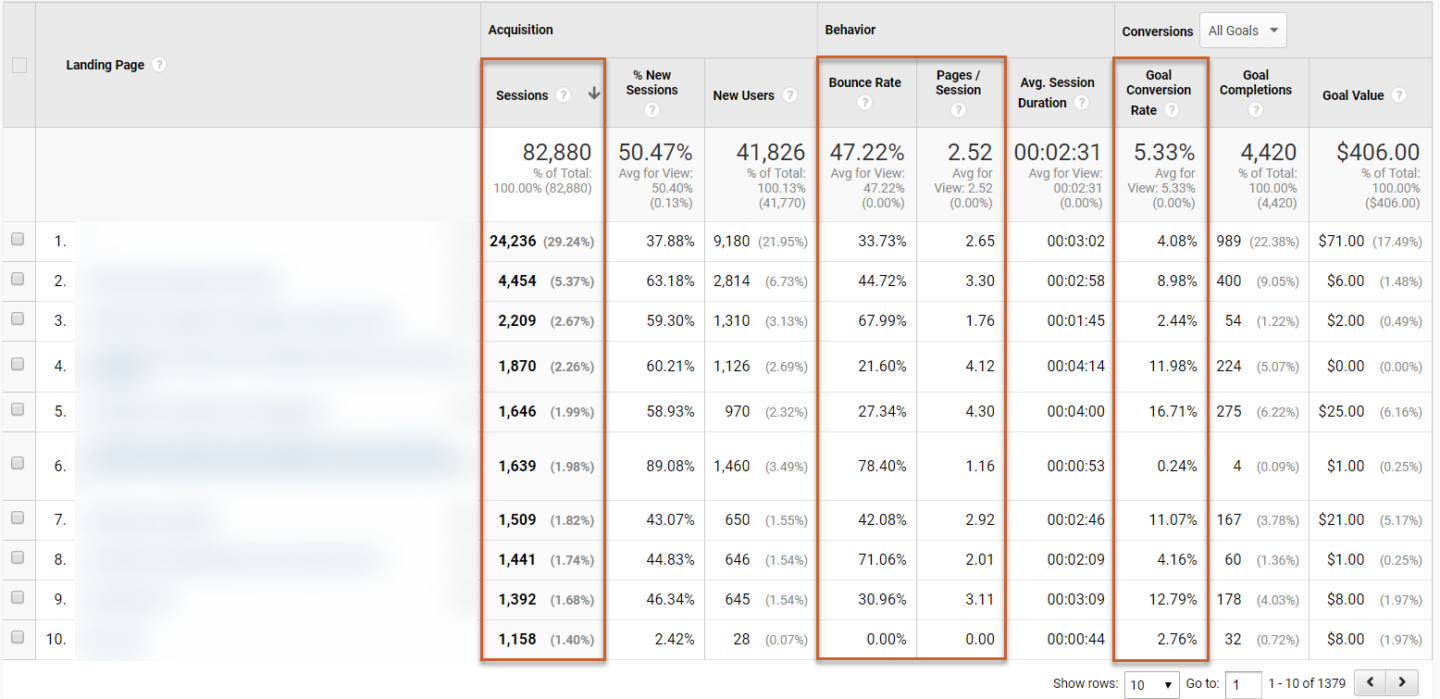

So, what do you need to do to be data driven? The first critical step is examining your pages in Google Analytics. This can be as simple as pulling a list of your top 25 landing pages and reviewing their traffic levels, engagement metrics (bounce rate, pages / session, etc.), and conversion rates.

Ask the following critical questions about your test before you begin:

- Do I have enough traffic for my test to be statistically significant?

- Are visitors engaged on this page or not (i.e. bounce rates or pages / session above or below site rate)?

- Are visitors taking the action we want them to take (i.e. conversion rate above or below site rate)?

On that final point, a simpler way to frame it is to ask: is it immediately clear what your page is trying to accomplish, so that it converts visitors coming to your site? Would a relative with no knowledge of your business be able to come to this page and describe what they should do? If you are struggling to answer this, you can use a tool like User Testing for a small fee to get real human insight on your pages in real time.
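For the first question above (do I have enough traffic?), a rough estimate can help before you commit to a page. The sketch below uses the standard sample-size approximation for comparing two conversion rates. The function names, the 3% baseline, the 20% relative lift, and the 5,000 weekly visitors are all illustrative assumptions, not figures from a real test, and your testing platform may calculate things differently.

```python
import math
from statistics import NormalDist


def visitors_per_variation(baseline_rate, relative_lift, alpha=0.05, power=0.80):
    """Approximate visitors needed in EACH variation (two-sided test).

    baseline_rate: current conversion rate of the page, e.g. 0.03 for 3%
    relative_lift: smallest lift worth detecting, e.g. 0.20 for +20%
    alpha / power: conventional defaults, assumed here for illustration
    """
    p1 = baseline_rate
    p2 = baseline_rate * (1 + relative_lift)
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_beta = NormalDist().inv_cdf(power)
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    return math.ceil((z_alpha + z_beta) ** 2 * variance / (p2 - p1) ** 2)


def weeks_to_run(weekly_visitors, n_per_variation, variations=2):
    """Weeks needed if page traffic is split evenly across all variations."""
    return math.ceil(n_per_variation * variations / weekly_visitors)


# Hypothetical page: 3% conversion rate, hoping to detect a 20% relative lift.
needed = visitors_per_variation(baseline_rate=0.03, relative_lift=0.20)
print("Visitors per variation:", needed)
print("Weeks at 5,000 visitors/week:", weeks_to_run(5000, needed))
```

If the estimated run time is measured in months, that is usually a sign to pick a higher-traffic page or to test a bolder change.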

Testing Tools

Another important point to keep in mind is that you shouldn’t rely on GA (or another analytics tool) alone for your quantitative data. Using something like Hotjar or Crazy Egg to gather additional clickmap, scrollmap, visitor recording, and polling data is absolutely critical to fully understanding your audience, their desires, and whether your planned testing area is meeting them. A deep understanding of UX Best Practices is also critical to inform a winning test variation.

If you don’t have the internal resources to fully prepare for this from a design perspective, Seer has a data driven design team that drives results.

Testing Evaluation

Once you’ve selected the tool(s) you’d like to use to gather data for your tests, it’s time to evaluate what to test. This can be done in two ways:

- An evaluation of all possible areas, narrowing in on areas with high traffic but poor engagement and conversion results.

- An evaluation of a subset of pages that are important to the business, using the same criteria as the first option.

The second option is always preferable if possible, for the following reasons:

- You can gain even more useful information by chatting internally with those who have experience with this page - how was it designed (and the original intent/goal of the page), how do they feel about its current state, what would be their wishlist for updates, etc.

- If you can prove results for an area like this, it is an instant way to get attention from a CMO or higher on why A/B testing (and data driven design) is important, and why it should be continued further. Show those bottom-line results on critical site areas!

Once you’ve decided on the area that you want to test, gather as much information as you can on this area, and break out your plan in a way that is easy for someone to digest.
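As a rough illustration of the screening criteria above (high traffic, but poor engagement and conversion compared to the site rate), here is a minimal sketch. It assumes you have exported landing page data from your analytics tool. The `LandingPage` fields, the `find_test_candidates` helper, the sample numbers, and the session threshold are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class LandingPage:
    path: str
    sessions: int
    bounce_rate: float      # 0.62 means 62%
    conversion_rate: float  # 0.011 means 1.1%


def find_test_candidates(pages, min_sessions):
    """Return pages with enough traffic that bounce more and convert less than the site rate."""
    total_sessions = sum(p.sessions for p in pages)
    # Site-wide rates, weighted by sessions so large pages count for more.
    site_bounce = sum(p.bounce_rate * p.sessions for p in pages) / total_sessions
    site_conversion = sum(p.conversion_rate * p.sessions for p in pages) / total_sessions
    flagged = [
        p for p in pages
        if p.sessions >= min_sessions
        and p.bounce_rate > site_bounce
        and p.conversion_rate < site_conversion
    ]
    # Highest-traffic candidates first.
    return sorted(flagged, key=lambda p: p.sessions, reverse=True)


# Made-up export for illustration only.
pages = [
    LandingPage("/pricing", 12000, 0.62, 0.011),
    LandingPage("/", 30000, 0.41, 0.030),
    LandingPage("/blog/post", 800, 0.85, 0.002),
    LandingPage("/demo", 9000, 0.38, 0.055),
]

for page in find_test_candidates(pages, min_sessions=5000):
    print(page.path, page.sessions, page.bounce_rate, page.conversion_rate)
```

A list like this is only a starting point. The conversations with internal stakeholders described above are what turn a flagged page into a real hypothesis.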

Pre-Test Hypothesis Development

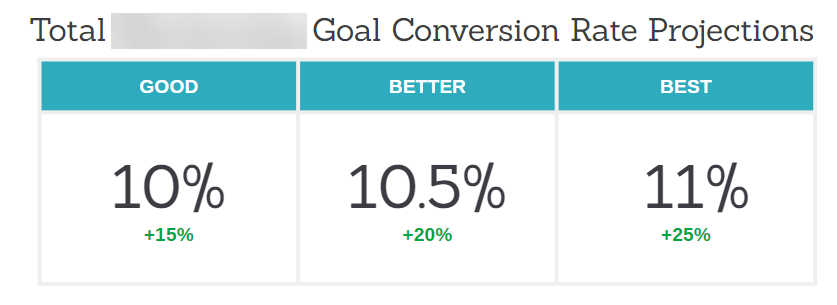

Presenting your plan for testing, along with the data to back it up, is an easy way to get buy-in. Without this, it’s almost a “let’s test this I guess?” scenario, which is much easier for someone to say no to than a hypothesis and plan that you have backed up. Ideally your presentation should include information on:

- Why are we testing this page?

- What are we seeing from converting visitors’ behavior on this page now?

- What is our hypothesis?

- What data driven changes do we recommend based on that hypothesis?

- What impact do we expect?

Once you’ve actually received buy-in on your test, it’s time to set up the test itself. You should set outcomes and success metrics before you start your test. This will allow you to show whether your test succeeded or not, and easily pivot in either scenario.

Post-Test Results & Analysis

Once your test has run and you’ve reached statistical significance against the Control variation (i.e. the original version of the page), you can end your test. From this point, you should update your presentation to show the results of the test, and take one of the following routes:

- If successful (based on your previously set benchmarks and versus the results from the Control), move to permanently update the page.

- If unsuccessful, analyze results to identify any key learnings and takeaways to inform future optimization.

Two critical things to remember about these paths:

- Tests, even when unsuccessful, are NEVER a waste of time. You’ve still learned what hasn’t worked and narrowed in on areas to test in the future. That’s success by process of elimination!

- Testing is not a one-off process - you haven’t perfected an area even if it is successful. Testing is an iterative process: one successful test means “this worked, what’s next?”
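If you want to sanity-check the statistical significance mentioned above yourself, one common approach is a two-proportion z-test. The sketch below is an illustration under that assumption, with made-up visitor and conversion counts and a hypothetical helper name. Your testing platform will likely report its own statistics, possibly using a different method, so treat this as a cross-check rather than a replacement.

```python
import math
from statistics import NormalDist


def two_proportion_z_test(control_conversions, control_visitors,
                          variant_conversions, variant_visitors):
    """Return (z, two-sided p-value) for variant vs. Control conversion rates."""
    p_control = control_conversions / control_visitors
    p_variant = variant_conversions / variant_visitors
    # Pooled rate under the assumption that both versions convert equally.
    pooled = (control_conversions + variant_conversions) / (
        control_visitors + variant_visitors
    )
    std_error = math.sqrt(
        pooled * (1 - pooled) * (1 / control_visitors + 1 / variant_visitors)
    )
    z = (p_variant - p_control) / std_error
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value


# Made-up results: Control converts 300 of 10,000, the variant 360 of 10,000.
z, p_value = two_proportion_z_test(300, 10000, 360, 10000)
print(f"z = {z:.2f}, p-value = {p_value:.4f}")
print("Significant at the 5% level" if p_value < 0.05 else "Not significant yet")
```

Whatever threshold you use, decide on it before the test starts, alongside your other success metrics, so the result can't be retrofitted afterwards.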

Conclusion & Summary

Now, with this process broken out, what will you test first? What data sources will you use to form your hypothesis? What customers or internal stakeholders could you talk to in order to form a better hypothesis? Who would you present your pitch to in order to get buy-in? How would you share your results after the test?

All of these are questions you should ask yourself before you start.

With a clear understanding of the data behind these areas and the designs that can convert visitors, you’ve set yourself up for success in A/B testing. The symmetry between your data and your design can lead to a truly beautiful outcome.

Additional Resources

- The Most Basic Framework for Developing an A/B Test Hypothesis

- UX Checklist Series: Landing Page Design

- Google Optimize 360 vs Optimize: What You Need To Know

- Introducing Personalization in Google Optimize

- PPC Landing Page Testing Using Optimize & Google Ads Integration