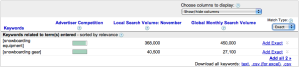

I'm hoping one of our readers can clarify an issue for me and the rest of the SEER team. One of the tools that we often use for our keyword research is Google's Keyword Tool. Recently, we noticed that this tool says the phrase "snowboarding equipment" has much higher search volume than the phrase "snowboarding gear":

This trend holds for all 3 match types (broad, phrase, and exact).

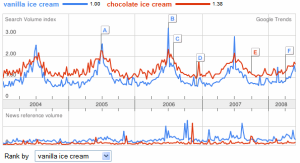

However, when I look at either Google Trends or Google Insights, I see the opposite trend, with the line for "snowboarding gear" sitting higher than the line for "snowboarding equipment":

So the question is: which tool is correct? Which phrase, "snowboarding gear" or "snowboarding equipment", has more search volume? I've personally spent a lot of time reading the Help for Trends and Insights, and I do understand that the graphs in Trends and Insights do not show actual volumes. I understand that the data has instead been normalized and scaled. However, I am not comfortable concluding that the discrepancy is due to normalization and scaling after looking at another example provided by Google itself.
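To show why normalization and scaling alone seem like an unsatisfying explanation, here is a minimal Python sketch. It rests on an assumption of mine, not on anything Google documents: that both phrases on one chart are rescaled by the same factor so that the highest point becomes 100. The function name `scale_to_common_peak`, the terms, and the numbers are all hypothetical.

```python
def scale_to_common_peak(series_by_term, top=100):
    """Rescale every series by one shared factor so the overall peak equals `top`.

    Assumption: a single common factor is used for all terms on the chart.
    This is a simplification for illustration, not Google's documented method.
    """
    peak = max(max(values) for values in series_by_term.values())
    return {
        term: [value * top / peak for value in values]
        for term, values in series_by_term.items()
    }


# Hypothetical monthly search counts for two phrases (made-up numbers).
raw = {
    "term_a": [200, 400, 300],
    "term_b": [100, 200, 150],
}

scaled = scale_to_common_peak(raw)

# The ratio between the two terms is the same before and after scaling.
raw_ratios = [a / b for a, b in zip(raw["term_a"], raw["term_b"])]
scaled_ratios = [a / b for a, b in zip(scaled["term_a"], scaled["term_b"])]
print(scaled)
print(raw_ratios, scaled_ratios)
```

Under that assumption, the phrase with more raw searches stays on top after scaling, so a shared scale factor by itself would not flip which phrase looks more popular. If the tools differ, something else (different data sources, date ranges, regions, or matching rules) would have to be involved.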

In a 2008 blog post about Google Trends, the Google team talks about using Trends to compare two phrases, "vanilla ice cream" and "chocolate ice cream":

Quote: "As the numbers on the top of the graph indicate, vanilla ice cream has about 30 percent less search traffic than chocolate ice cream."

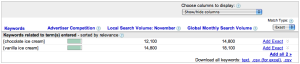

After reading this statement, I started thinking that Google is saying it is OK to use Trends or Insights to make inferences about relative search volumes. However, this is what Google's Keyword Tool says about the volumes for "vanilla ice cream" and "chocolate ice cream":

Here, "vanilla ice cream" seems to have higher search volume than "chocolate ice cream". Again, this general trend holds for all 3 match types. If you were making decisions for your business, would you believe Google Trends and assume that "chocolate ice cream" is more popular than "vanilla ice cream", or would you believe the Keyword Tool and assume that "vanilla ice cream" is more popular?

Has anyone else noticed these types of discrepancies? Which tool do you (or would you) trust to make inferences about search volumes? Does anyone have any explanations for how several tools from Google can suggest conflicting conclusions?