After seeing a post several months ago on the SEOmoz blog, I wanted to benchmark more potentially low quality sites to see how Google treated them. The data brewed for about five months, and now let's look at some interesting takeaways below.

The Websites: We're starting with 844 article directory type submission websites. These sites had to be previously/currently open to submissions. Indexed page counts were tracked over the past 5 months.

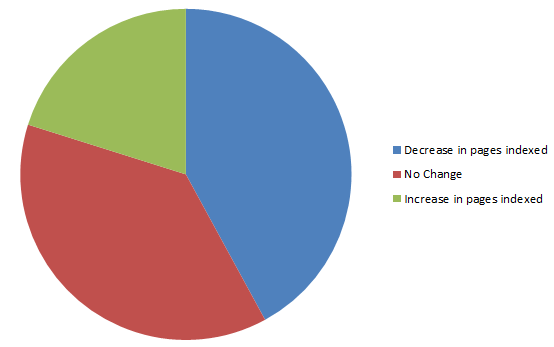

Pages Indexed On The Decline:

Out of the 844 websites tracked, 355 (42%) had fewer pages indexed compared to 5 months ago. 170 (20%) had an increase in pages indexed and 319 (38%) remained unchanged. We'll dive deeper into the increase and decrease, but I wanted to clarify the number of sites that remained unchanged. Included in the 844 total sites were a large number that were already deindexed, 337 to be exact. I wanted to include these sites so we could analyze how Google is treating sites dropped from their index 5 months later.
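For anyone who wants to double-check the split, the percentages can be recomputed from the raw counts. This is just a quick sketch; the variable and function names are my own and are not part of the tracking setup used for the study.

```python
# Counts reported in the study (844 sites tracked over 5 months).
total_sites = 844
counts = {"decreased": 355, "increased": 170, "unchanged": 319}

# The three groups should account for every site tracked.
assert sum(counts.values()) == total_sites


def share(count, total):
    """Return count as a percentage of total, rounded to one decimal."""
    return round(100 * count / total, 1)


shares = {group: share(n, total_sites) for group, n in counts.items()}

for group, pct in shares.items():
    print(f"{group}: {counts[group]} sites ({pct}%)")
```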

We found the following information:

The number of deindexed sites rose from 337 to 342. That seems like a small increase, but we found that there were 44 new sites deindexed and 39 sites that started getting indexed once again. Of the 39 sites that started getting indexed again, we found that only 8 of them had more than 10 pages indexed.

This leads me to a few conclusions:

1. Google is letting sites that were deindexed back in, but it is few (just over 10% in this study) and far between. (Help for Panda) (Insights for Penguin)
2. When Google let these sites back in, the majority were only able to get the homepage and a few other pages indexed. Google is taking time to trust sites it once dropped from the index.
3. Google is still actively removing low quality sites from their index: 5.2% of the sites we reviewed were newly deindexed, and that percentage is actually higher (8.7%) when you exclude the 337 sites that were already deindexed.
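The rates behind these conclusions come straight from the counts reported earlier, and the arithmetic can be sketched as follows. The names here are hypothetical labels I picked for readability; only the numbers come from the study.

```python
# Counts reported in the study.
total_sites = 844
deindexed_at_start = 337
newly_deindexed = 44
reindexed = 39
reindexed_over_10_pages = 8


def pct(part, whole):
    """Return part as a percentage of whole, rounded to one decimal."""
    return round(100 * part / whole, 1)


# Net change in deindexed sites: 44 dropped out, 39 came back.
deindexed_now = deindexed_at_start + newly_deindexed - reindexed

# Share of previously deindexed sites that Google let back in.
reinclusion_rate = pct(reindexed, deindexed_at_start)

# Newly deindexed sites as a share of all sites tracked...
removal_rate_all = pct(newly_deindexed, total_sites)

# ...and as a share of only the sites that were indexed at the start.
indexed_at_start = total_sites - deindexed_at_start
removal_rate_indexed = pct(newly_deindexed, indexed_at_start)

print(deindexed_now, reinclusion_rate, removal_rate_all, removal_rate_indexed)
```

The 8.7% figure uses the 507 sites that were still indexed at the start as the denominator, since a site that was already deindexed could not be deindexed again.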

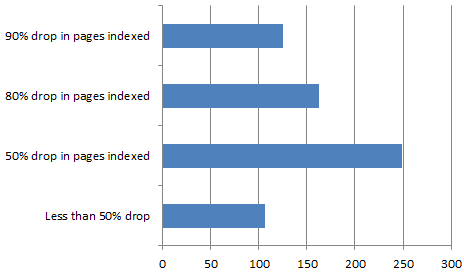

Let's look into the increases & decreases

355 sites had a drop in pages indexed. Of those, 249 (70%) had their pages indexed cut in half or more. 163 (46%) sites had their pages indexed cut by 80% or more. 125 (35%) had their pages cut by 90% or more. These are some really large page indexation drops, and they point to a few conclusions.

1. Google doesn't use a fine-toothed comb when choosing to drop pages from their index. It uses a machete.
2. 44 of the 125 were 100% drops (deindexed). Many sites won't be able to switch over to profitable PPC or other sources of traffic fast enough while they wait/scramble for Google to reinclude them.
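If you want to run the same kind of breakdown on your own list of sites, the bucketing can be sketched like this. It assumes you have a before and after indexed page count for each site. The function names and the sample numbers are hypothetical and are not the data from this study.

```python
def drop_percent(before, after):
    """Percentage of indexed pages lost between two snapshots."""
    if before <= 0:
        raise ValueError("site must have had pages indexed at the start")
    return 100 * (before - after) / before


def drop_buckets(sites):
    """Count sites at each cumulative drop threshold (50%, 80%, 90%, 100%)."""
    thresholds = (50, 80, 90, 100)
    tally = {t: 0 for t in thresholds}
    for before, after in sites:
        lost = drop_percent(before, after)
        for t in thresholds:
            # Thresholds are cumulative: a 92% drop counts toward 50, 80 and 90.
            if lost >= t:
                tally[t] += 1
    return tally


# Hypothetical (before, after) page counts, NOT data from the study.
sample = [(1000, 400), (500, 90), (2000, 150), (300, 0), (800, 700)]
buckets = drop_buckets(sample)
print(buckets)
```

The buckets overlap on purpose, which matches how the numbers are reported here: the 125 sites cut by 90% or more are also part of the 163 and the 249.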

BUT THERE WERE INCREASED PAGES INDEXED IN LOW QUALITY SITES...

Out of the 844 total sites reviewed, 170 increased in total pages indexed. Google wanted to invest more time with their crawlers on certain sites & deemed a great amount of their pages good enough for the index. That seems to be somewhat of a silver lining for owners of these types of sites. Here's the bubble burster:

Sites with an increase: 85 (50%) gained fewer than 100 pages

Sites with a decrease: 217 (61%) lost more than 100 pages

I'm not going to read too much significance into this, but it sheds some light on the idea that Google is more likely to cut pages than to give them back.

Taking the median number seemed to be the best way of finding some type of average.

Sites with a drop, median # of pages deindexed: 601

Sites with a gain, median # of pages added to the index: 140
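To show why the median is a safer "average" for this kind of data, here is a small sketch with made-up numbers. One huge site can drag the mean far away from what a typical site experienced, while the median barely moves. The list below is hypothetical and is not the study data.

```python
from statistics import mean, median

# Hypothetical pages lost per site, NOT data from the study.
# The last site is a very large outlier.
pages_lost = [10, 80, 150, 600, 900, 1200, 250000]

typical_loss = median(pages_lost)       # middle value of the sorted list
average_loss = round(mean(pages_lost))  # pulled upward by the outlier

print(f"median: {typical_loss}, mean: {average_loss}")
```

In this made-up sample, six of the seven sites lost 1,200 pages or fewer, yet the mean lands above 36,000. The median stays at the middle site's loss.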

Google is not letting up in their assault on poor quality websites. This short study looks at article directory type sites, but we're seeing this for almost all low quality sites across the industry. With Google's update over the weekend against low quality exact match domains (great initial look from Dr Pete), more studies will continue to show that what worked great a few years back can't be applied to find success today.

Quick shout to Ethan Lyon (is @seomadscientist taken yet?) for helping me pull updated numbers faster.

Check out our case studies page on seerinteractive.com for more examples on how SEER advances companies in search results through strategies that provide value.